From Hype to Reality

The first wave of AI in DAM was, to put it charitably, enthusiastic. Vendors rushed to add AI-powered capabilities: auto-tagging, object recognition, background removal, on-brand image generation. The feature lists grew long and the demos were impressive.

Reality pushed back.

Auto-tagging turned out to be inconsistent. Models trained on general-purpose datasets rarely understood brand-specific context. What people expected was a system that understood the image content well enough to generate correct, context-aware metadata tags and assign the asset to the right node in an existing product catalogue. What they got was a list of generic tags that needed cleaning up.

AI-generated images raised immediate concerns around authenticity, copyright, and brand consistency. And the tools built to check for “on-brand” compliance often passed images that were technically compliant but culturally off. They could verify a logo placement or approve colour usage, but could not tell you whether an image was appropriate for a specific market.

Content provenance became its own problem. Standards like C2PA exist to track the origin and modification history of generated content, but they only work if the standard is supported by both the content creators and the content consumers. DAM should play an important role here, but that is a topic for another article.

That said, it is worth being clear about where AI genuinely delivers in a DAM context, because there is real value here. Computer vision tasks, such as smart cropping, smart compression, background removal, object detection and on-the-fly image transformation, work very well and save meaningful time. Natural language processing for transcription and translation is solid. Auto-generated text descriptions have matured considerably and are, in practice, far more useful than keyword tags alone. There are more examples of where AI delivers real value, but those deserve an article of their own.

Organisations were not immune to the turmoil.

Organisations have been exposed to a wave of experimental AI features layered onto existing DAM platforms, often raising expectations that could not be met. This has left many in a difficult spot. On the one hand, there is a constant stream of rapid innovation from companies like Anthropic, OpenAI, and Google, with seemingly “game-changing” updates every week. That creates pressure from leadership to “have AI” in place, which often leads to rushed decisions and frustration when implementations do not deliver the promised value.

On the other hand, organisations now seem to be growing hesitant to adopt AI at all. The right approach lies somewhere in between. Stay focused on real user needs. If a tool does not solve a clearly defined problem, it should not be implemented. Successful DAM strategies have always started with understanding the problem first. AI is no different. If it helps solve it, great. If not, it is just another tool, not a requirement.

Agentic AI: A New Kind of Requirement

The more consequential shift is the arrival of agentic AI — autonomous software processes that reason and act across systems without human input at each step. But how is this related to DAM platforms? Are we aiming to add yet another AI-powered feature on top of our existing toolkits? And is it anything that clients have asked for? Or is it just functionality that has been implemented because it was technically possible and nobody had thought about its usefulness?

Well, clients haven’t asked for it. However, since DAMs are at the heart of the marketing technology stack, adjusting to technological shifts in connected systems has always been part of a DAM’s DNA.

The moment the impact of agentic AI on DAMs became concrete for me was when I read an article about agentic e-commerce. Here is a very brief outline of what I am talking about. Agentic e-commerce describes a new way of digital shopping, if you will. The idea is this. In your AI tool of choice, say ChatGPT or Claude, you type: “Buy Nike Air Force One Sneakers in white, size US 11, and make sure I get them delivered to my home address by Friday. Costs must be lower than €100 including delivery.” Your AI tool will then spawn an agent that searches online shops to discover the product you are looking for, compares the offerings, evaluates authenticity, and completes the transaction. The next thing you notice is the box arriving by mail on Friday and €89 booked to your card.

Think about what that means for DAM. Generally speaking, in a product data-driven e-commerce environment, PIM systems provide structured product data and DAMs deliver formatted assets with matching metadata: SKU, approval status, channel availability, colour, size, and so on. When an agent compares product offerings, it does not care about the design and layout of a webshop. It does not care about styling or mood imagery. It is a machine after all, and it goes through these basic steps:

- Find and discover the product
- Compare search results based on criteria
- Make a decision to pick the product that best fits the requirements
- Complete the transaction
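The four steps above can be sketched in a few lines of Python. This is a minimal, hypothetical sketch: the `Offer` fields, the shop names, the dates and the rule "cheapest qualifying offer wins" are assumptions for illustration, not how any particular agent actually works. The criteria mirror the sneaker prompt: colour, size, a price below €100 including delivery, and delivery by Friday.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Offer:
    """Hypothetical offer record an agent might assemble from shop endpoints."""
    shop: str
    product: str
    colour: str
    size_us: float
    price_eur: float       # total price including delivery
    delivery_date: date
    authentic: bool        # result of the agent's authenticity check (assumed)


def find(offers: list[Offer], product: str) -> list[Offer]:
    """Step 1: discover offers for the requested product."""
    return [o for o in offers if o.product == product]


def compare(offers: list[Offer], colour: str, size_us: float,
            max_price_eur: float, deliver_by: date) -> list[Offer]:
    """Step 2: keep only offers that satisfy every stated criterion."""
    return [
        o for o in offers
        if o.colour == colour
        and o.size_us == size_us
        and o.price_eur < max_price_eur
        and o.delivery_date <= deliver_by
        and o.authentic
    ]


def decide(candidates: list[Offer]) -> Optional[Offer]:
    """Step 3: pick the best fit; here simply the cheapest qualifying offer."""
    return min(candidates, key=lambda o: o.price_eur, default=None)


def transact(offer: Offer) -> str:
    """Step 4: placeholder for checkout; a real agent would call a shop API."""
    return f"ordered from {offer.shop} for €{offer.price_eur:.2f}"


offers = [
    Offer("shop-a", "Nike Air Force One", "white", 11, 95.0, date(2026, 5, 8), True),
    Offer("shop-b", "Nike Air Force One", "white", 11, 89.0, date(2026, 5, 8), True),
    Offer("shop-c", "Nike Air Force One", "white", 11, 79.0, date(2026, 5, 12), True),
]
friday = date(2026, 5, 8)
best = decide(compare(find(offers, "Nike Air Force One"), "white", 11, 100.0, friday))
if best is not None:
    print(transact(best))
```

The cheapest offer (shop-c) loses because it cannot deliver by Friday, which is exactly the kind of trade-off step 2 exists for.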

What interests us here are steps 2 and 3. When the agent evaluates products, it cross-references technical product data against product asset metadata:

Visual descriptors: what is on the product image?

- view: side
- background: white
- scene: lifestyle
- logo_visible: true

Rights and approval data: agents must know whether an asset can be used.

- approved_for_channel
- approved_for_market
- rights_expiration
- usage_restrictions

Localisation: both products and assets must support localisation.

- language
- market
- channel

Alt text, EXIF data, C2PA provenance signals, product IDs, and how well the asset description matches the product description are also taken into account. This list is not exhaustive; it is just meant to give you an idea.
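To make the idea of structured asset metadata concrete, here is one way the fields listed above could be modelled as a typed record and serialised to JSON for an agent. The field names inside the three groups come from the lists above; the class names, `asset_id`, `product_id`, `alt_text`, `description` and all values are hypothetical, and every DAM uses its own schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import Optional


@dataclass
class VisualDescriptors:
    view: str                 # e.g. "side"
    background: str           # e.g. "white"
    scene: str                # e.g. "lifestyle"
    logo_visible: bool


@dataclass
class RightsAndApproval:
    approved_for_channel: list[str]
    approved_for_market: list[str]
    rights_expiration: Optional[date]     # None = no expiry (assumption)
    usage_restrictions: list[str] = field(default_factory=list)


@dataclass
class Localisation:
    language: str
    market: str
    channel: str


@dataclass
class AssetMetadata:
    asset_id: str
    product_id: str           # explicit link to the product record in the PIM
    alt_text: str
    description: str
    visual: VisualDescriptors
    rights: RightsAndApproval
    localisation: Localisation


asset = AssetMetadata(
    asset_id="IMG-0001",
    product_id="SKU-12345",
    alt_text="White sneaker, side view, logo visible",
    description="Side view of a white low-top sneaker",
    visual=VisualDescriptors("side", "white", "lifestyle", True),
    rights=RightsAndApproval(["webshop"], ["DE", "US"], date(2027, 12, 31)),
    localisation=Localisation("en", "US", "webshop"),
)

# asdict() recurses into the nested dataclasses; default=str turns the date into "YYYY-MM-DD".
print(json.dumps(asdict(asset), default=str, indent=2))
```

Approval and rights live in dedicated, typed fields rather than in free-text notes, which is what makes them readable by a machine.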

The agent then builds a confidence score. If that score falls below a threshold — if the data does not add up — the product is flagged as potentially inauthentic and dropped from consideration.
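A minimal sketch of such a confidence check, assuming a simple weighted checklist. The weights, the threshold of 70 points, the field names and the word-overlap measure (Jaccard similarity on lower-cased words) are hypothetical choices for illustration; agents do not publish their scoring, and many will use learned models instead.

```python
from dataclasses import dataclass

THRESHOLD = 70  # hypothetical cut-off, in points out of 100


@dataclass
class ProductRecord:
    """Hypothetical product data as an agent might receive it from a PIM feed."""
    product_id: str
    colour: str
    description: str


def word_overlap(a: str, b: str) -> float:
    """Jaccard similarity of the two texts' lower-cased word sets (0.0 to 1.0)."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    if not wa or not wb:
        return 0.0
    return len(wa & wb) / len(wa | wb)


def confidence_score(product: ProductRecord, asset: dict) -> int:
    """Sum the points of every check that passes."""
    checks = [
        (35, asset.get("product_id") == product.product_id),
        (15, asset.get("colour") == product.colour),
        (15, bool(asset.get("alt_text"))),
        (15, asset.get("approval_status") == "approved"),   # machine-readable field
        (20, word_overlap(product.description, asset.get("description", "")) >= 0.5),
    ]
    return sum(points for points, passed in checks if passed)


def evaluate(product: ProductRecord, asset: dict) -> str:
    score = confidence_score(product, asset)
    return "keep" if score >= THRESHOLD else "flag as potentially inauthentic"


product = ProductRecord("SKU-12345", "white", "white leather low-top sneaker")
clean = {
    "product_id": "SKU-12345", "colour": "white", "approval_status": "approved",
    "alt_text": "White sneaker, side view",
    "description": "white leather low-top sneaker side view",
}
# Approval written into a notes field and no product ID: nothing the agent can verify.
sloppy = {"colour": "white", "notes": "approved for web", "description": "sneaker"}

print(confidence_score(product, clean), evaluate(product, clean))
print(confidence_score(product, sloppy), evaluate(product, sloppy))
```

The sloppy record may well depict the right product, but because its approval sits in free text and it carries no product ID, it scores too low and is dropped.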

This only works with genuinely structured data. An asset needs to carry the product ID of the product it depicts. Approval status needs to be a machine-readable field value, not a comment in a notes field. The connection between asset and product record needs to be explicit.

In this model, the DAM becomes a trust layer. Only rights-cleared, brand-compliant, and properly attributed assets should enter the automation stream. Most DAMs can store this type of data. The question is whether organisations have actually maintained it and whether it is readable by agents. Sloppy metadata is no longer just an internal housekeeping problem. It costs you sales.
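The trust-layer idea can be expressed as a gate in front of the automation stream: only assets that pass every check reach an agent. A minimal sketch, assuming assets arrive as dicts using the field names from the lists earlier in this article plus a hypothetical `brand_compliant` flag. Treating a missing rights field as "not cleared" and `None` as "no expiry" are design assumptions, not a standard.

```python
from datetime import date
from typing import Iterable, Iterator


def is_agent_ready(asset: dict, channel: str, market: str, today: date) -> bool:
    """True only if the asset is attributed, brand-compliant, approved and rights-cleared."""
    if not asset.get("product_id"):                   # properly attributed to a product
        return False
    if asset.get("brand_compliant") is not True:      # hypothetical compliance flag
        return False
    if channel not in asset.get("approved_for_channel", []):
        return False
    if market not in asset.get("approved_for_market", []):
        return False
    if "rights_expiration" not in asset:              # unknown rights status: keep it out
        return False
    expiry = asset["rights_expiration"]               # None means no expiry (assumption)
    return expiry is None or expiry >= today          # valid through the expiry date


def trust_gate(assets: Iterable[dict], channel: str, market: str,
               today: date) -> Iterator[dict]:
    """Yield only the assets that may enter the automation stream."""
    for asset in assets:
        if is_agent_ready(asset, channel, market, today):
            yield asset


base = {"product_id": "SKU-1", "brand_compliant": True,
        "approved_for_channel": ["webshop"], "approved_for_market": ["DE"]}
assets = [
    {**base, "id": "IMG-1", "rights_expiration": date(2027, 1, 1)},
    {**base, "id": "IMG-2", "rights_expiration": date(2025, 1, 1)},   # expired
    {**base, "id": "IMG-3"},                                          # rights unknown
    {**base, "id": "IMG-4", "rights_expiration": None},               # no expiry
]
ready = [a["id"] for a in trust_gate(assets, "webshop", "DE", date(2026, 6, 1))]
print(ready)
```

The gate fails closed: an asset whose rights status is simply unknown is treated the same as an expired one.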

To sum up, brands that want to succeed in an agentic e-commerce world will need to realise the following:

- What agents see when they look at your brand. For an agent, your brand is not your logo or your campaign imagery. It is the completeness and accuracy of your structured product data.
- The first touchpoint is no longer a page but an endpoint. Agents do not visit your website. They talk to an API endpoint. They discover, compare and buy, with every decision based on data alone.
- The DAM as a competitive asset, not a filing system. Investing in metadata quality today is not a housekeeping task. It is a competitive decision.

MCP: A Smarter Way to Connect Your MarTech Stack

So how do you make a DAM accessible to AI agents — and why does the same answer also solve a much older problem?

The answer is the Model Context Protocol (MCP), an open standard developed by Anthropic. MCP gives any system a standardised way to describe what it can do, so that AI agents can discover and call those capabilities without custom code. In its simplest form, a DAM vendor wraps its existing API in an MCP layer, which exposes the API's endpoints to AI agents as tools. The system immediately becomes connectable to any other MCP-compatible tool in the stack.
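To illustrate what describing capabilities means on the wire: MCP messages are JSON-RPC 2.0, a server lists its tools in response to a `tools/list` request (each with a `name`, a `description` and a JSON Schema `inputSchema`), and an agent invokes one with `tools/call`. The sketch below answers just those two requests in-process for a hypothetical `search_assets` tool that wraps a stand-in DAM function. It deliberately skips the transport, the initialisation handshake and everything else a real server needs; in practice you would build on one of the official MCP SDKs rather than hand-roll this.

```python
import json

# Stand-in for the DAM's existing internal API (hypothetical data and function).
ASSETS = [
    {"id": "IMG-1", "campaign": "Summer Campaign 2026", "status": "approved"},
    {"id": "IMG-2", "campaign": "Summer Campaign 2026", "status": "draft"},
]


def dam_search(campaign: str, status: str) -> list[str]:
    return [a["id"] for a in ASSETS
            if a["campaign"] == campaign and a["status"] == status]


# The MCP layer: each tool pairs a self-describing descriptor with a handler.
TOOLS = {
    "search_assets": {
        "descriptor": {
            "name": "search_assets",
            "description": "Find asset IDs by campaign and approval status.",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "campaign": {"type": "string"},
                    "status": {"type": "string", "enum": ["approved", "draft"]},
                },
                "required": ["campaign", "status"],
            },
        },
        "handler": dam_search,
    },
}


def error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer a single JSON-RPC 2.0 request for tools/list or tools/call."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": [t["descriptor"] for t in TOOLS.values()]}
    elif method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return error(request["id"], -32602, "Unknown tool")   # JSON-RPC invalid params
        output = tool["handler"](**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": json.dumps(output)}],
                  "isError": False}
    else:
        return error(request["id"], -32601, "Method not found")   # JSON-RPC standard code
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


print(handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
print(handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
              "params": {"name": "search_assets",
                         "arguments": {"campaign": "Summer Campaign 2026",
                                       "status": "approved"}}}))
```

The descriptor is what lets an agent use the tool without custom integration code: it learns the tool's name, purpose and required inputs from the server itself.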

Easier said than done, I know.

Essentially, if your DAM data is accessible to a machine (an AI agent, in this case), you have laid the groundwork for external tools to connect to your data and use it in new and emerging e-commerce contexts.

Once you have added an MCP layer to your DAM, you can not only access but also manage your DAM from a prompt inside, say, ChatGPT. This is no longer theory: I have seen live demos of exactly this from a few vendors. You could, for example, ask ChatGPT something along the lines of: “Get me download links for all approved assets for the ‘Summer Campaign 2026’, convert them to an Instagram-optimised format and dimensions, and send me the links by email.”
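Behind a prompt like that, the agent breaks the request into a chain of tool calls, feeding the output of one into the next. A hypothetical sketch: the tool names (`search_assets`, `convert_asset`, `send_email`), the `instagram_feed` preset, the URLs and the recipient are invented for illustration, and local functions stand in for a DAM's MCP server. Each call is recorded as the `tools/call` request an agent would send.

```python
import itertools

# Local stand-ins for tools a DAM's MCP server might expose (all hypothetical).
LIBRARY = [
    {"id": "IMG-1", "campaign": "Summer Campaign 2026", "status": "approved"},
    {"id": "IMG-2", "campaign": "Summer Campaign 2026", "status": "approved"},
    {"id": "IMG-3", "campaign": "Summer Campaign 2026", "status": "draft"},
]
OUTBOX: list[dict] = []


def search_assets(campaign: str, status: str) -> list[str]:
    return [a["id"] for a in LIBRARY
            if a["campaign"] == campaign and a["status"] == status]


def convert_asset(asset_id: str, preset: str) -> str:
    return f"https://dam.example.com/renditions/{asset_id}/{preset}"


def send_email(to: str, links: list[str]) -> str:
    OUTBOX.append({"to": to, "links": links})
    return "sent"


TOOLS = {"search_assets": search_assets, "convert_asset": convert_asset,
         "send_email": send_email}
CALL_LOG: list[dict] = []
_ids = itertools.count(1)


def call_tool(name: str, **arguments):
    """Record the JSON-RPC request an agent would send, then run the local stand-in."""
    CALL_LOG.append({"jsonrpc": "2.0", "id": next(_ids), "method": "tools/call",
                     "params": {"name": name, "arguments": arguments}})
    return TOOLS[name](**arguments)


# The plan derived from the prompt: search, convert each hit, then email the links.
ids = call_tool("search_assets", campaign="Summer Campaign 2026", status="approved")
links = [call_tool("convert_asset", asset_id=i, preset="instagram_feed") for i in ids]
call_tool("send_email", to="me@example.com", links=links)

print([c["params"]["name"] for c in CALL_LOG])
```

In a real agent the plan itself comes from the model; the point is that a single prompt turns into a handful of ordinary, inspectable API calls.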

MCP and the GUI: A Question Worth Asking

This, however, raises something I find genuinely interesting and am not ready to answer definitively: is a text prompt actually a better interface than a GUI?

Once your DAM is agent-accessible, the things you can do are impressive, no doubt. From an engineering perspective it is great and new, but how useful is it really? A well-built DAM GUI with faceted search is also genuinely efficient. When you open a filter panel, you see the metadata values that actually exist in your library. You do not need to remember whether the campaign was filed under “Summer_26_EMEA” or “SS26 Campaign.” You see the options and click. A text prompt shifts that cognitive load back to the user and introduces the kind of ambiguity that a good UI eliminates by design.
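The difference is easy to see in code. A filter panel is essentially a facet count over the values that actually exist in the library, while a prompt has to name the value from memory. The library entries below are hypothetical and reuse the two spellings from the paragraph above; the lookup is a naive exact match, and a capable agent might fuzzy-match or ask back, which is precisely the extra step a facet makes unnecessary.

```python
from collections import Counter

library = [
    {"id": "IMG-1", "campaign": "Summer_26_EMEA"},
    {"id": "IMG-2", "campaign": "SS26 Campaign"},
    {"id": "IMG-3", "campaign": "Summer_26_EMEA"},
    {"id": "IMG-4", "campaign": None},            # untagged asset
]


def facet_values(assets: list[dict], field: str) -> list[tuple[str, int]]:
    """What a filter panel shows: every existing value with its asset count."""
    return Counter(a[field] for a in assets if a.get(field)).most_common()


def exact_match(assets: list[dict], field: str, value: str) -> list[str]:
    """What a naive lookup does with a remembered (guessed) value."""
    return [a["id"] for a in assets if a.get(field) == value]


print(facet_values(library, "campaign"))                         # both spellings, with counts
print(exact_match(library, "campaign", "Summer Campaign 2026"))  # guessed name finds nothing
```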

My view: for a regular DAM user who knows what they are looking for, a well-designed GUI is still hard to beat. When it comes to batch processing, I can see how the agentic approach may be helpful. It is possible that AI chat interfaces will become the central way humans interact with computers and that any task will be run through a prompt, in which case, yes, it will be unavoidable that your DAM is accessible through one as well.

Generally speaking, the two interfaces solve different problems, and the right answer for most organisations is a DAM that offers both.

MCP Delivers Much More Than Prompted Asset Searches

MCP matters well beyond agentic workflows. If agents are capable of making product purchase decisions (see agentic e-commerce above), they should also be capable of orchestrating data flows between two or more systems.

DAM integration has always been painful. Every connection to a PIM, a CMS, or a project management platform has historically been a bespoke engineering project. Someone has to understand two different APIs, write glue code, and maintain it every time either platform changes. Most organisations end up with a short list of integrations that justified the cost, and a much longer list that did not.

Automation middleware tools improved this picture — you could connect systems visually, without writing code. But these tools still work through point-to-point connectors, one for each pair of applications. The logic is linear and static: if X happens in system A, do Y in system B. It does not adapt, it does not reason, and when a platform updates its API, the connector may break if the vendor does not release an updated connector alongside — or better, prior to — the product launch.

MCP as an Integration Interface

MCP is a step change beyond this. Instead of building bilateral connections between specific application pairs, every platform publishes a single MCP server that provides a standardised description of its capabilities. Any MCP-compatible system can read that description and immediately know what the platform can do and how to call it. Without going into too much technical detail: tool discovery is part of the MCP specification itself, so a client can simply ask a server which tools it exposes.

A DAM, a PIM, a CMS, and a project tool, each with their own MCP server, become composable without a single point-to-point connector between them. An agent connects to all four, understands what each can do, and orchestrates across them dynamically. New tools added to the stack are immediately available to every connected agent. The maintenance burden of managing dozens of individual connectors largely disappears. The biggest benefit, in my view, is the speed and quality with which these integrations can be deployed. You still need to understand how software works and have a solid grasp of the architecture and the user perspective, but that is it. You can get quite far without requiring any engineering resources.
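A quick back-of-the-envelope comparison shows why this matters as a stack grows. Assuming the worst case, where every system needs to talk to every other one, point-to-point integration needs one connector per pair, n(n-1)/2 for n systems, whereas with MCP each system publishes one server. Real stacks rarely connect every pair, so treat the connector count as an upper bound.

```python
def point_to_point_connectors(n: int) -> int:
    """One connector per pair of systems: n choose 2 (full mesh, worst case)."""
    return n * (n - 1) // 2


def mcp_servers(n: int) -> int:
    """One MCP server per system, regardless of how many others it works with."""
    return n


for n in (4, 10, 20):
    print(f"{n} systems: {point_to_point_connectors(n)} connectors vs {mcp_servers(n)} MCP servers")
```

For the four systems above (DAM, PIM, CMS, project tool) that is 6 connectors versus 4 servers; at 20 systems it is 190 versus 20.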

For DAM specifically, a well-built MCP server exposes genuine operational depth: search by metadata combinations, retrieve assets in specified formats, check approval status, filter by campaign, return download links, update field values. An agent can pull approved assets from the DAM, attach them as product data assets to the product structure in your PIM, then export the data to the e-commerce platform and update task status in a project tool, all in a single automated, scheduled sequence, without a human involved at each handoff.
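The sequence described above can be sketched as one scheduled function. This is a hypothetical, in-memory illustration: the four small classes stand in for the DAM, PIM, e-commerce and project-tool MCP servers, and their method names are invented. In a real set-up each method call would be a `tools/call` to a separate server, and an agent could choose the order dynamically; here the order is fixed, as it would be for a scheduled run.

```python
class Dam:
    def __init__(self, assets: list[dict]):
        self.assets = assets

    def approved_assets(self, campaign: str) -> list[dict]:
        return [a for a in self.assets
                if a["campaign"] == campaign and a["status"] == "approved"]


class Pim:
    def __init__(self):
        self.product_assets: dict[str, list[str]] = {}

    def attach_asset(self, product_id: str, asset_id: str) -> None:
        self.product_assets.setdefault(product_id, []).append(asset_id)

    def product(self, product_id: str) -> dict:
        return {"product_id": product_id,
                "assets": list(self.product_assets.get(product_id, []))}


class Shop:
    def __init__(self):
        self.published: list[dict] = []

    def export(self, product: dict) -> None:
        self.published.append(product)


class ProjectTool:
    def __init__(self):
        self.tasks: dict[str, str] = {}

    def update_task(self, task_id: str, status: str) -> None:
        self.tasks[task_id] = status


def orchestrate(dam, pim, shop, project, campaign: str, task_id: str) -> list[str]:
    """DAM -> PIM -> e-commerce -> project tool, with no human at each handoff."""
    touched: list[str] = []
    for asset in dam.approved_assets(campaign):             # 1. pull approved assets
        pim.attach_asset(asset["product_id"], asset["id"])  # 2. attach to the product
        if asset["product_id"] not in touched:
            touched.append(asset["product_id"])
    for product_id in touched:                              # 3. export enriched products
        shop.export(pim.product(product_id))
    project.update_task(task_id, "done")                    # 4. close the task
    return touched


dam = Dam([
    {"id": "IMG-1", "product_id": "SKU-1", "campaign": "SS26", "status": "approved"},
    {"id": "IMG-2", "product_id": "SKU-1", "campaign": "SS26", "status": "approved"},
    {"id": "IMG-3", "product_id": "SKU-2", "campaign": "SS26", "status": "draft"},
])
pim, shop, project = Pim(), Shop(), ProjectTool()
print(orchestrate(dam, pim, shop, project, campaign="SS26", task_id="TASK-7"))
print(shop.published, project.tasks)
```

Only the approved assets travel downstream; the draft asset for SKU-2 never reaches the PIM or the shop.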

This is the theory. Again, I have seen live demos from vendors showing exactly this, and it is impressive. I think it will be, well, a game-changer. That said, let us not forget the downsides.

Running tasks through AI models is costly over time and, more importantly, consumes a significant amount of natural resources. AI data centres are projected to use ten times the electricity in 2030 compared to 2023 (source: Energy and AI report, www.iea.org).

Not all MCP layers are created equal. The devil is in the details. Is the vendor simply exposing some read-only endpoints as MCP tools? That may be enough for a sales demo, but is it enough if you are planning to build agentic integrations around it? If that is what you are planning, it is worth checking whether the vendor has implemented guardrails or intelligence around their MCP tools, rather than simply forwarding incoming agent requests directly to the internal API.
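What guardrails around MCP tools might look like in practice: instead of forwarding agent requests straight to the internal API, the MCP layer validates them first. A minimal sketch with hypothetical policies (a tool allowlist, a read-only mode, a short list of fields agents may write, and a cap on result size); the tool names and the internal API stubs are invented for illustration.

```python
class GuardrailError(Exception):
    """Raised when an agent request violates the MCP layer's policy."""


WRITABLE_FIELDS = {"alt_text", "description"}   # hypothetical: agents may not touch approvals
MAX_RESULTS = 100                               # hypothetical cap per search


# Stubs for the DAM's internal API (hypothetical).
def search_assets(query: str, limit: int) -> list[str]:
    return [f"IMG-{i}" for i in range(1, limit + 1)]


def update_field(asset_id: str, field: str, value: str) -> str:
    return f"{asset_id}.{field} updated"


INTERNAL_API = {"search_assets": search_assets, "update_field": update_field}


def guarded_call(tool: str, arguments: dict, *, read_only: bool) -> object:
    """Validate and normalise an agent request before it reaches the internal API."""
    if tool not in INTERNAL_API:
        raise GuardrailError(f"tool not exposed: {tool}")
    if tool == "update_field":
        if read_only:
            raise GuardrailError("write attempted on a read-only connection")
        if arguments.get("field") not in WRITABLE_FIELDS:
            raise GuardrailError(f"field not writable by agents: {arguments.get('field')}")
    if tool == "search_assets":
        # Clamp the requested page size instead of trusting the agent's value.
        limit = max(1, min(int(arguments.get("limit", MAX_RESULTS)), MAX_RESULTS))
        arguments = {**arguments, "limit": limit}
    return INTERNAL_API[tool](**arguments)


print(len(guarded_call("search_assets", {"query": "sneaker", "limit": 10_000}, read_only=True)))
print(guarded_call("update_field", {"asset_id": "IMG-1", "field": "alt_text",
                                    "value": "White sneaker, side view"}, read_only=False))
try:
    guarded_call("update_field", {"asset_id": "IMG-1", "field": "approval_status",
                                  "value": "approved"}, read_only=False)
except GuardrailError as exc:
    print("blocked:", exc)
```

A demo-grade MCP layer would pass all three requests through unchanged; the guardrails are what make it safe to let an agent write to the DAM at all.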

And let us be honest, we do not yet know if any of this works at scale.

What This Means for Your DAM Strategy in 2026

On AI features — stay focused on real user needs. If a tool does not solve a clearly defined problem, it should not be implemented. Successful DAM strategies have always started with understanding the requirements first — it is no different with AI-powered tools. If it helps solve a problem, great. If not, it is just another tool, not a requirement.

On agentic readiness — ask your DAM vendor directly about their MCP roadmap. If you manage product assets for e-commerce, this is quite urgent. The agents driving the next generation of product discovery will need clean, structured, machine-readable metadata. Your DAM is either ready to serve them or it is not.

On integrations — even outside agentic commerce, MCP simplifies MarTech integration in ways worth taking seriously now. Treat MCP support as a selection criterion, not a future consideration.

On the GUI — you do not have to choose. A strong UI for daily users and an MCP layer for automated workflows are complementary, not competing.

Conclusion

AI in DAM has grown up. The experimental phase is behind us. What we are navigating now is a shift from AI as a feature to AI as part of the fundamental infrastructure through which assets are managed, governed, and accessed.

Agentic AI is the sharpest edge of that shift — driven by e-commerce automation, workflow orchestration, and the straightforward fact that software agents are increasingly the systems requesting your assets. Making your DAM connectable, structured, and metadata-complete is no longer just good practice. It is a competitive requirement.

MCP will be central to how this plays out — not because a prompt field will replace a DAM interface, but because it may become the standard channel through which agents, integrations, and automated workflows interact with your asset library.

The organisations that get ahead of this are the ones with clean metadata, well-governed libraries, and DAM vendors who have already done the work. Now is a good time to find out which camp you are in.

Frequently Asked Questions

What does agentic AI mean for Digital Asset Management?

Agentic AI refers to autonomous software processes that act across systems without human input at each step. For Digital Asset Management, this means that AI agents, not just human users, are increasingly the ones requesting assets and metadata. A DAM must be structured, machine-readable, and API-accessible to serve them.

What is MCP and why does it matter for DAM?

MCP, or Model Context Protocol, is an open standard developed by Anthropic that gives any system a standardised way to describe its capabilities so that AI agents can discover and call them without custom code. For DAM platforms, an MCP layer exposes existing API endpoints to AI agents and makes the system immediately connectable to any other MCP-compatible tool in the MarTech stack — including PIM, CMS, and project management platforms. DAM vendors who have implemented MCP are already demonstrating live workflows where agents retrieve, convert, and distribute approved assets entirely without human involvement at each handoff.

How does agentic e-commerce affect DAM and product asset metadata?

In agentic e-commerce, an AI agent autonomously discovers products, compares offerings, and completes transactions without a human ever browsing a website. When the agent evaluates products, it cross-references structured product data against asset metadata, including visual descriptors, approval status, rights expiration, channel availability, and localisation fields. If the asset metadata is incomplete or unstructured, the product can be flagged as potentially inauthentic and dropped from consideration. For brands managing product assets in a DAM, this means sloppy metadata no longer just creates internal inefficiency; it costs sales.

What should organisations ask their DAM vendor about MCP readiness?

Ask whether the vendor has implemented a full MCP layer or is simply exposing a small set of read-only endpoints for demo purposes. A production-ready MCP implementation should support metadata search, asset retrieval in specified formats, approval status checks, field value updates, and campaign-level filtering — not just basic asset downloads. It should also include guardrails and logic around how incoming agent requests are handled, rather than forwarding them directly to the internal API. For organisations managing product assets for e-commerce, MCP readiness should be treated as a DAM selection criterion today, not a future consideration.

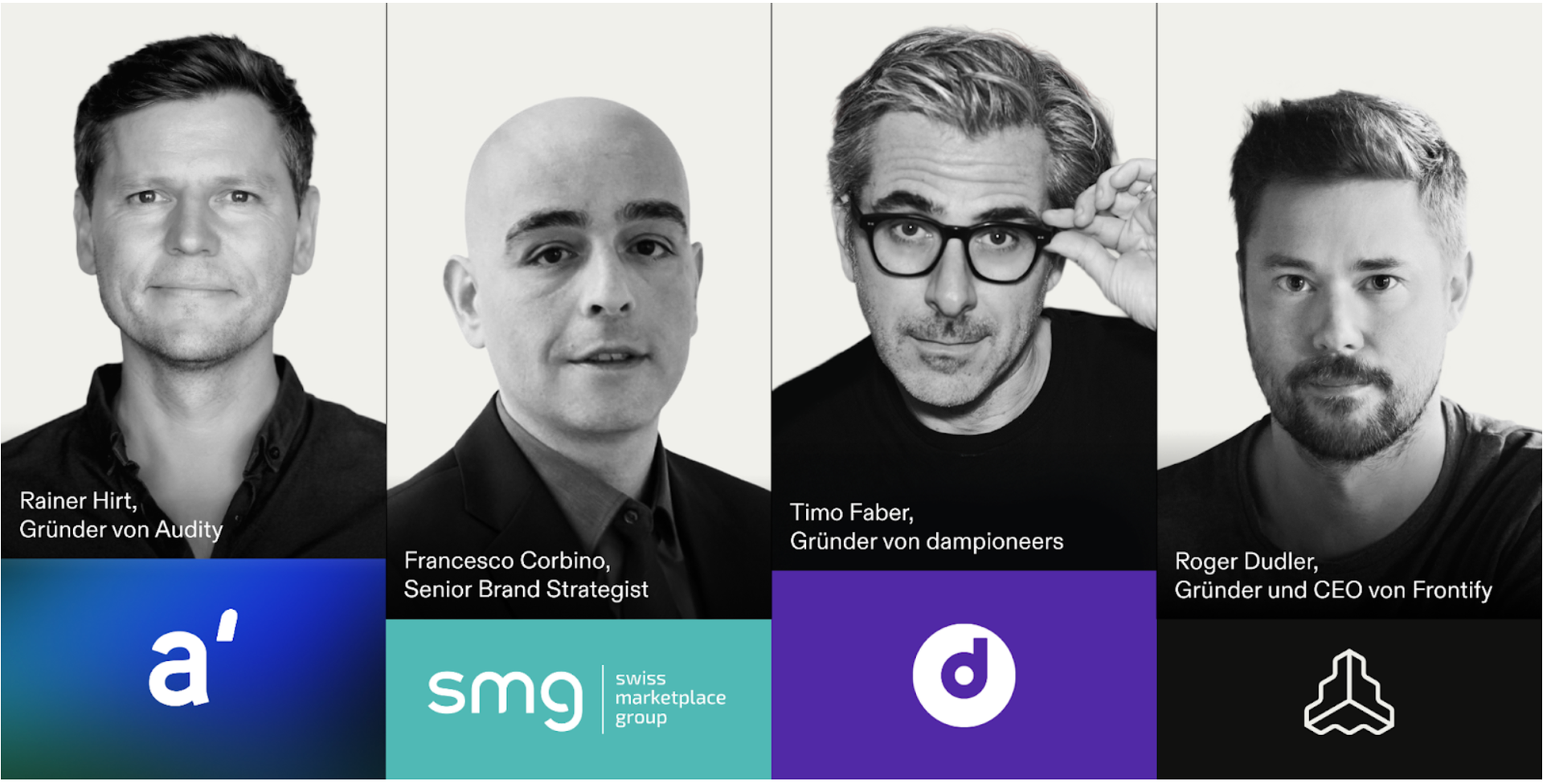

Timo Faber

Timo Faber is an independent Digital Asset Management consultant and founder of dampioneers, based in Munich. With over two decades in the DAM industry, including as Product Manager at Xinet, a leading DAM vendor later acquired by Northplains, he helps organisations across the DACH region, Europe and the US select, implement, integrate and optimise DAM systems. Fully vendor-agnostic.